This video is a short documentary about A.H. Reginald Buller's connection to the history of the University of Manitoba.

Biological Sciences

What we offer

In Fall 2024, our program offerings will be changing.

To learn more about our new program, refer to the Biological Sciences (new program) video. To see how these changes affect you as a current Major/Honours student in the Department of Biological Sciences, refer to the Biological Sciences (transition plan) video.

News and stories

UMToday


Winnipeg Free Press: Annual awards celebrate volunteers’ commitment, passion

Faculty of Science, UM Today


Insect Friends and Foes

Faculty of Agricultural and Food Sciences, Faculty of Science, Research and International, Students


Celebrating Work-Integrated Learning (WIL)

Faculty of Science


Witness the spark in science when students compete for $3,000 cash prize!

Faculty of Science

Events


Final exam period - Winter Term

Apr 26

12:00 am

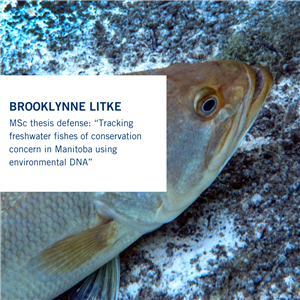

MSc thesis defense: Brooklynne Litke

May 1

10:00 am to 12:00 pm

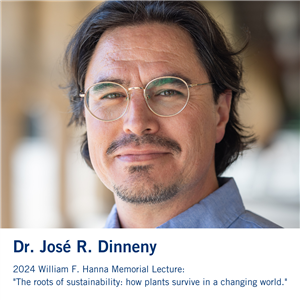

William F. Hanna Memorial Lecture Series: Dr. José R. Dinneny (Stanford University)

May 2

7:00 pm to 8:00 pm

Science Rendezvous

May 11

11:00 am to 3:00 pm

Voluntary withdrawal deadline for Winter/Summer Term classes

May 16

12:00 am


You may also be looking for

Dana Schroeder Memorial Scholarship in Genetics

Dana always encouraged her students to apply for as many scholarships as possible, so her family wanted to set up an award in her memory. These awards will go to a graduate student in Biological Sciences who is studying genetics. The family is contributing the initial funds, and anyone who wishes to make an additional donation is encouraged to do so.

Permanent awards require a very large initial donation ($25,000), but smaller amounts can be spent out through the Faculty of Science. For example, $5,000 could fund a $1,000 award each year for five years, which is closer to the scenario we expect.

When you visit the donor page, you can either enter your name or remain anonymous. The family will receive a list of the names entered and the total amount donated, but Donor Relations never releases individual donation amounts.

Contact us


Undergraduate inquiries:

biosciuginquiry@umanitoba.ca

Graduate inquiries:

biograd.program@umanitoba.ca

Our office

Department of Biological Sciences

212B Biological Sciences Building

50 Sifton Road

University of Manitoba

Winnipeg, Manitoba, R3T 2N2 Canada